Category: Philosophy

-

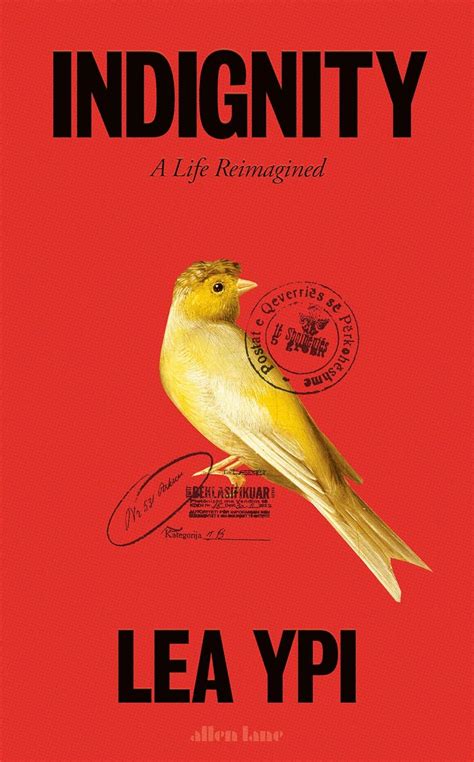

true imagination: Indignity by Lea Ypi

Sometimes the idea of a book far outshines the book itself.

-

keep shuffling those ideas around

Factorial math just makes the tarot look even more magical, to be honest.

-

on the valence of designed things

The context of a work of design tells the viewer something about the world that the design implies, and signals how seriously it should be taken.

-

on discernment

The really blunt way to put this would be to say that a bit of cultural elitism might not be a bad thing right now.